www.ptreview.co.uk

03 '26


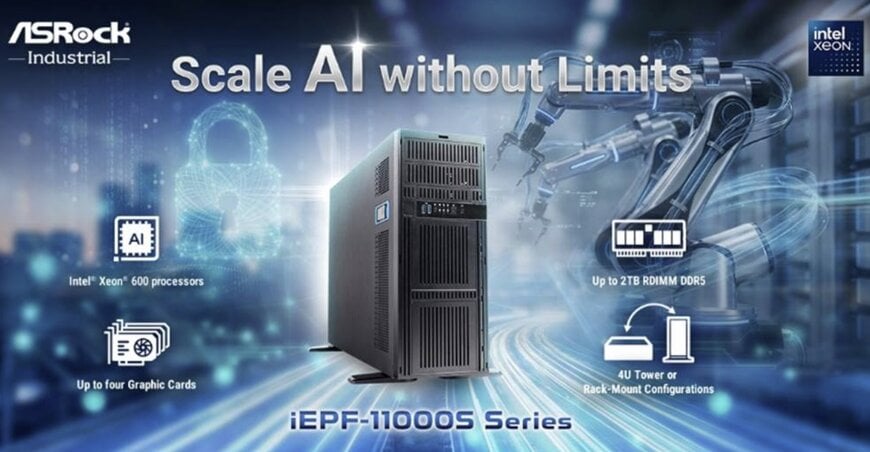

ASRock Industrial Introduces Xeon 600 Edge AI Platform

New 4U edge system targets multi-GPU AI training and inference in industrial environments, combining high memory capacity, PCIe Gen5 expansion, and remote management features.

www.asrockind.com

Edge AI workloads such as visual inspection, real-time analytics, and generative AI increasingly require data center–class compute performance outside centralized facilities. In this context, ASRock Industrial has introduced the iEPF-11000S Series, an expandable edge AIoT platform built around Intel® Xeon® 600 processors and the W890 chipset.

Quad-GPU architecture for compute-intensive edge tasks

The iEPF-11000S Series is designed for industrial AI applications that combine high CPU throughput, large memory pools, and GPU acceleration. The platform supports up to 2 TB of quad-channel DDR5 ECC RDIMM or RDIMM-3DS memory, targeting memory-intensive workloads such as AI model training, multi-model inference, predictive maintenance algorithms, and large language model (LLM) deployment at the edge.
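As a rough illustration of what the 2 TB ceiling means for LLM deployment, the sketch below estimates the memory needed for model weights alone. The function names, the 70-billion-parameter example, and the precision table are illustrative assumptions rather than figures from ASRock Industrial. Activations, KV cache, and training optimizer state would add to these numbers.

```python
# Back-of-the-envelope estimate of model weight memory (weights only).
# Model sizes used here are hypothetical examples, not vendor figures.

# 2 TB from the article, taken as decimal gigabytes (an assumption).
PLATFORM_MEMORY_GB = 2000

# Bytes needed to store one parameter at common numeric precisions.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1}


def weights_footprint_gb(params_billion: float, precision: str) -> float:
    """Approximate weight size in GB: billions of parameters x bytes per parameter."""
    return params_billion * BYTES_PER_PARAM[precision]


def fits_in_memory(params_billion: float, precision: str) -> bool:
    """True if the weights alone fit within the platform's maximum system memory."""
    return weights_footprint_gb(params_billion, precision) <= PLATFORM_MEMORY_GB


if __name__ == "__main__":
    for precision in ("fp32", "fp16", "int8"):
        size = weights_footprint_gb(70, precision)
        fits = fits_in_memory(70, precision)
        print(f"70B parameters at {precision}: {size:.0f} GB, fits: {fits}")
```

Under these assumptions, a hypothetical 70-billion-parameter model occupies about 140 GB at 16-bit precision, which leaves substantial headroom for running several models side by side in system memory.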

GPU expansion is a defining feature. The system accommodates up to four discrete graphics cards, enabling parallel inference or training scenarios where multiple AI models run concurrently. This configuration supports use cases in automated optical inspection, robotics guidance, and real-time analytics pipelines that demand high compute density outside traditional data centers.

PCIe Gen5 connectivity underpins the expansion architecture, with six PCIe x16 (Gen5) and one PCIe x8 (Gen5) slots available. Gen5 bandwidth is particularly relevant for high-performance GPUs and NVMe storage devices, where data throughput can become a bottleneck in edge AI systems.
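To put the Gen5 figure in perspective, the following sketch computes the theoretical per-direction link bandwidth of a PCIe slot from the per-lane transfer rate and the 128b/130b line encoding used since PCIe 3.0. The function name is hypothetical, and the result is a raw link rate: real throughput is lower once protocol overhead is included, and the article does not state how lanes are allocated when every slot is populated.

```python
# Theoretical PCIe link bandwidth per direction, before protocol overhead.

# Per-lane transfer rate in gigatransfers per second for each generation.
GT_PER_S = {3: 8.0, 4: 16.0, 5: 32.0}

# PCIe 3.0 and later carry 128 payload bits in every 130 bits on the wire.
ENCODING_EFFICIENCY = 128 / 130


def pcie_bandwidth_gb_s(generation: int, lanes: int) -> float:
    """Return approximate one-way bandwidth in GB/s for a link of the given width."""
    gigabits_per_s = GT_PER_S[generation] * ENCODING_EFFICIENCY * lanes
    return gigabits_per_s / 8  # convert bits to bytes


if __name__ == "__main__":
    for gen, lanes in [(4, 16), (5, 8), (5, 16)]:
        print(f"Gen{gen} x{lanes}: {pcie_bandwidth_gb_s(gen, lanes):.1f} GB/s per direction")
```

A Gen5 x16 slot works out to roughly 63 GB/s in each direction, double the Gen4 x16 figure, while the Gen5 x8 slot matches a Gen4 x16 link.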

Memory, storage, and data handling at scale

For industrial automation and digital supply chain environments, system stability and data integrity are critical. The platform integrates ECC memory support and Intel® VMD RAID 0/1/5/10 across eight SATA3 ports to manage storage redundancy and performance. Additional storage options include dual M.2 Key M slots.
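The trade-off between the listed RAID levels can be illustrated with a small capacity calculation. This is a generic sketch of standard RAID arithmetic, not Intel's implementation: the function name and the 4 TB drive size are assumptions, and RAID 1 is modeled as a single mirror set in which every drive holds the same data.

```python
# Usable capacity for the RAID levels named in the article (0, 1, 5, 10).
# Assumes identical drives; the drive size used below is a hypothetical example.


def usable_capacity_tb(level: int, drives: int, drive_tb: float) -> float:
    """Return usable capacity in TB for a RAID array of identical drives."""
    if level == 0:
        if drives < 2:
            raise ValueError("RAID 0 needs at least 2 drives")
        return drives * drive_tb  # striping only, no redundancy
    if level == 1:
        if drives < 2:
            raise ValueError("RAID 1 needs at least 2 drives")
        return drive_tb  # every drive mirrors the same data
    if level == 5:
        if drives < 3:
            raise ValueError("RAID 5 needs at least 3 drives")
        return (drives - 1) * drive_tb  # one drive's worth of parity
    if level == 10:
        if drives < 4 or drives % 2:
            raise ValueError("RAID 10 needs an even number of drives, at least 4")
        return drives / 2 * drive_tb  # striped mirror pairs
    raise ValueError(f"unsupported RAID level: {level}")


if __name__ == "__main__":
    for level in (0, 5, 10):
        print(f"RAID {level}, 8 x 4 TB: {usable_capacity_tb(level, 8, 4.0):.0f} TB usable")
```

With all eight SATA ports populated by hypothetical 4 TB drives, RAID 0 yields 32 TB with no fault tolerance, RAID 5 yields 28 TB and tolerates one drive failure, and RAID 10 yields 16 TB while tolerating one failure per mirror pair.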

Networking options include dual 1 GbE LAN ports with Intel® vPro support and optional dual 10 GbE connectivity. These interfaces are intended for high-bandwidth data ingestion from cameras, sensors, or production systems, as well as integration into enterprise IT and OT networks.

The chassis, measuring 602.6 × 175 × 438 mm, supports both 4U rack-mount and tower configurations, enabling deployment in factory control rooms, edge data cabinets, or industrial server racks. A 1600 W ATX power supply is integrated to support multiple high-performance GPUs and sustained compute loads.

Security and remote administration features include TPM 2.0 and Intel® vPro, addressing common requirements for distributed industrial edge nodes that must be managed across multiple sites.

A lower-footprint alternative for inference-focused deployments

Alongside the Xeon-based system, ASRock Industrial introduced the iEPF-10000S Series, aimed at applications with tighter space and power constraints. This series is powered by Intel® Core™ Ultra processors (Arrow Lake-S) and Intel® Core™ Series 2 processors (Bartlett Lake-S) and supports up to two discrete GPUs.

The reduced GPU capacity positions the iEPF-10000S Series for machine vision, AI-enabled inspection, and industrial analytics tasks where inference performance is required but large-scale model training is not. The system architecture targets production-line and equipment-integrated deployments with tighter footprint and power budgets.

Remote management in distributed edge environments

Both series support out-of-band (OOB) management modules to facilitate remote monitoring and control in distributed edge AI deployments. The iEPF-11000S Series supports the AI-M2-OOB-1G module, while the iEPF-10000S Series supports the AI-OOB-LITE module.

This approach reflects a broader shift toward decentralized AI processing, where compute resources are positioned closer to data sources in manufacturing, logistics, and inspection systems. By combining multi-GPU scalability, high-capacity ECC memory, and remote management, the platforms are structured to handle AI training and inference workloads within industrial environments rather than relying solely on centralized cloud or data center infrastructure.
