www.ptreview.co.uk

16

'26


High-speed vision system for poultry feeding analysis

Allied Vision camera platform supports real-time biomechanical monitoring in precision livestock farming through AI-based computer vision and behavioural data capture.

www.alliedvision.com

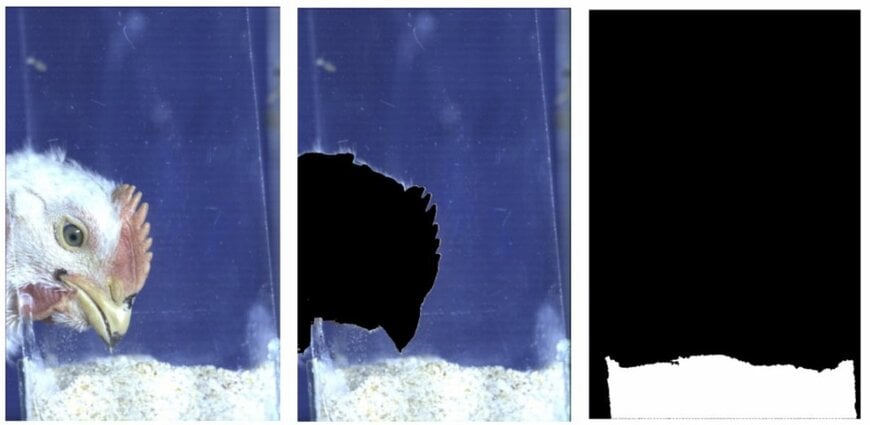

Validation of the background subtraction algorithm: (1) original frame; (2) intermediate processing removing the bird instance; (3) final binary mask of the feed ROI used for displacement tracking (photo courtesy of Paulista University)
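The caption describes a three-step background-subtraction chain. Below is a minimal sketch of that idea. It assumes three inputs: grayscale frames stored as nested lists, a reference frame of the scene without feed or bird (`background`), and a boolean bird mask supplied by the segmentation stage. The names `feed_mask` and `changed_fraction`, the 30-grey-level threshold and the use of changed pixels as a proxy for feed displacement are illustrative assumptions, not the study's published method:

```python
Frame = list[list[int]]           # grayscale image, values 0..255
Mask = list[list[bool]]           # True = pixel belongs to the object of interest
ROI = tuple[int, int, int, int]   # x0, y0, x1, y1 (half-open) in pixels


def feed_mask(frame: Frame, background: Frame, bird: Mask, roi: ROI,
              threshold: int = 30) -> Mask:
    """Binary feed mask: ROI pixels that differ from the feed-free background
    by more than `threshold`, with pixels covered by the bird removed."""
    x0, y0, x1, y1 = roi
    mask = [[False] * len(row) for row in frame]
    for y in range(y0, y1):
        for x in range(x0, x1):
            if bird[y][x]:
                continue  # step (2): drop the bird instance
            mask[y][x] = abs(frame[y][x] - background[y][x]) > threshold
    return mask


def changed_fraction(prev: Mask, curr: Mask, roi: ROI) -> float:
    """Share of ROI pixels whose feed/no-feed state changed between two masks,
    used here as a simple (hypothetical) proxy for feed displacement."""
    x0, y0, x1, y1 = roi
    changed = sum(prev[y][x] != curr[y][x]
                  for y in range(y0, y1) for x in range(x0, x1))
    return changed / ((x1 - x0) * (y1 - y0))


# Tiny synthetic 4x4 example: a 2x2 feed patch, one pixel of it covered by the bird.
background = [[10] * 4 for _ in range(4)]
frame = [[10, 10, 10, 10],
         [10, 200, 200, 10],
         [10, 200, 200, 10],
         [10, 10, 10, 10]]
bird = [[False] * 4 for _ in range(4)]
bird[1][2] = True
roi = (0, 0, 4, 4)
m1 = feed_mask(frame, background, bird, roi)
m0 = [[False] * 4 for _ in range(4)]  # earlier frame with no feed visible
print(sum(map(sum, m1)), changed_fraction(m0, m1, roi))
```

Because pixels hidden by the bird are simply set to False, a fuller implementation would also exclude pixels occluded in either frame before counting changes.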

Automated behavioural monitoring is becoming increasingly relevant in precision livestock farming, where real-time data supports production efficiency, animal welfare assessment and process optimisation. In this context, Allied Vision hardware was used in a research study demonstrating an AI-based machine vision system for analysing broiler feeding biomechanics.

Machine vision enters precision livestock farming workflows

The research, conducted by Paulista University in São Paulo, Brazil, presents an automated sensing framework designed to quantify feeding behaviour in broiler chickens. The work focuses on capturing the biomechanical interaction between animals and feeding systems, an aspect rarely monitored in commercial poultry production even though feed accounts for up to 70 % of production costs.

Traditional monitoring approaches, such as invasive markers, manual annotation or retrospective growth analysis, do not provide the continuous behavioural datasets required by cyber-physical agricultural systems. The study instead proposes an automated sensing approach capable of generating data streams suitable for digital twin modelling of poultry production processes.

Optical setup designed for high-speed kinematic capture

The experimental setup was built around an Allied Vision (formerly Mikrotron) EoSens™ CoaXPress high-speed industrial camera equipped with a Nikon 50 mm f/1.4 lens. The camera was positioned between 1.0 m and 1.5 m from the feeder to capture lateral motion profiles of feeding behaviour.
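The article does not state the camera's sensor size, so the exact field of view cannot be derived. As a rough, hypothetical estimate, the thin-lens relation gives a magnification m = f / (d - f) for focal length f and object distance d. A sensor of width w then images an object-plane width of w / m. The 16 mm sensor width and 7 µm pixel pitch below are assumed values for illustration only. The stated 1.0 m and 1.5 m working distances are treated as d:

```python
SENSOR_WIDTH_MM = 16.0   # hypothetical sensor width, not stated in the article
PIXEL_PITCH_MM = 0.007   # hypothetical 7 µm pixel pitch
FOCAL_MM = 50.0          # Nikon 50 mm lens used in the study


def object_side_size(image_mm: float, focal_mm: float, distance_mm: float) -> float:
    """Thin-lens estimate of the object-plane size that maps onto `image_mm` on the sensor."""
    if distance_mm <= focal_mm:
        raise ValueError("object distance must exceed the focal length")
    magnification = focal_mm / (distance_mm - focal_mm)
    return image_mm / magnification


for d in (1000.0, 1500.0):  # the 1.0 m and 1.5 m working distances
    fov = object_side_size(SENSOR_WIDTH_MM, FOCAL_MM, d)
    px = object_side_size(PIXEL_PITCH_MM, FOCAL_MM, d)
    print(f"{d / 1000:.1f} m: field of view about {fov:.0f} mm, "
          f"pixel footprint about {px:.3f} mm")
```

With these assumed numbers, the sketch gives a horizontal field of view of about 304 mm at 1.0 m and 464 mm at 1.5 m. Each pixel covers roughly 0.13 to 0.20 mm at the feeder.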

Lighting was standardised with a 500 W, 6500 K LED light source delivering 3000 to 5000 lux. At high frame rates, each exposure can last no longer than the interval between frames, so strong illumination is needed to maintain image signal quality.

Operating at 300 frames per second (one frame every 3.3 ms, compared with about 33 ms for conventional 30 fps video), the system provided enough temporal resolution to capture rapid beak movements that conventional video would miss. Spatial calibration was performed using a physical reference scale placed at feeder height.
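Kinematic measurements depend on two figures: the frame interval and the pixel scale. The sketch below is minimal. It assumes the two end points of the reference scale have already been located in the image. It also assumes a tracked point, such as a beak tip, is available as a list of per-frame pixel coordinates. The 100 mm scale length, the sample coordinates and the function names are hypothetical:

```python
import math

FPS = 300.0                        # frame rate reported in the study
FRAME_INTERVAL_MS = 1000.0 / FPS   # about 3.33 ms between frames

Point = tuple[float, float]


def mm_per_pixel(scale_length_mm: float, end_a: Point, end_b: Point) -> float:
    """Spatial calibration: known scale length divided by its length in pixels."""
    return scale_length_mm / math.dist(end_a, end_b)


def speeds_mm_per_s(track_px: list[Point], scale: float) -> list[float]:
    """Frame-to-frame speed of a tracked point: distance per frame times the frame rate."""
    return [math.dist(a, b) * scale * FPS for a, b in zip(track_px, track_px[1:])]


# Hypothetical example: a 100 mm scale spans 500 px at feeder height.
scale = mm_per_pixel(100.0, (120.0, 400.0), (620.0, 400.0))
beak_tip = [(300.0, 200.0), (303.0, 204.0), (303.0, 204.0)]
print(f"{scale:.2f} mm/px, frame interval {FRAME_INTERVAL_MS:.2f} ms")
print(speeds_mm_per_s(beak_tip, scale))
```

A single planar scale is only valid for motion roughly in the plane where it was placed. Movement towards or away from the camera would need a second view or a different calibration.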

Validation testing used a biological dataset of nine broiler chickens spread over three growth stages, chosen to represent variation across the production cycle.

AI-based detection and segmentation pipeline

Image data from the high-speed camera was processed through a three-stage computer vision pipeline implemented in Python 3.10. The architecture used PyTorch 2.10.0 for deep learning inference and OpenCV 4.13.0.92 for image preprocessing.

The workflow combined YOLOv8 object detection with Meta AI's Segment Anything Model (SAM) to perform anatomical segmentation and behavioural tracking. This hybrid architecture supported object detection and motion tracking under farm conditions, including variable lighting and partial occlusion.
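The article does not spell out how the detector and the segmenter exchange data. A common pattern, shown here as an assumption rather than the authors' code, uses each detection box as a prompt for the segmenter. In practice, `detect` might wrap an Ultralytics YOLOv8 model and `segment` a SAM predictor called with a box prompt. In this sketch, both are passed in as plain callables so the control flow runs without model weights. `Detection`, `FrameResult` and `run_pipeline` are hypothetical names. The single-bird assumption reduces the tracking stage to ordering results by frame:

```python
from dataclasses import dataclass
from typing import Any, Callable, Sequence

Box = tuple[float, float, float, float]  # x0, y0, x1, y1 in pixels


@dataclass
class Detection:
    box: Box
    score: float


@dataclass
class FrameResult:
    frame_index: int
    box: Box
    mask_area: int  # foreground pixels in the segmentation mask


def run_pipeline(
    frames: Sequence[Any],
    detect: Callable[[Any], Sequence[Detection]],
    segment: Callable[[Any, Box], Sequence[Sequence[bool]]],
    min_score: float = 0.5,
) -> list[FrameResult]:
    """Detect, then segment inside the best box, then collect a per-frame track for one bird."""
    track: list[FrameResult] = []
    for i, frame in enumerate(frames):
        # Stage 1: keep confident detections only.
        dets = [d for d in detect(frame) if d.score >= min_score]
        if not dets:
            continue  # bird absent or fully occluded in this frame
        best = max(dets, key=lambda d: d.score)
        # Stage 2: segment inside the best box (e.g. SAM with a box prompt).
        mask = segment(frame, best.box)
        # Stage 3: append to the track; with a single bird this is just frame order.
        track.append(FrameResult(i, best.box, sum(map(sum, mask))))
    return track


# Stand-in callables so the control flow runs without model weights.
def fake_detect(frame: Any) -> list[Detection]:
    return [Detection((10.0, 10.0, 50.0, 40.0), 0.9)] if frame is not None else []


def fake_segment(frame: Any, box: Box) -> list[list[bool]]:
    return [[True, False], [True, True]]


result = run_pipeline(["f0", None, "f2"], fake_detect, fake_segment)
print([(r.frame_index, r.mask_area) for r in result])
```

Injecting the models as callables keeps the orchestration testable. It also lets a detector or segmenter be swapped without changing the loop.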

System inference was tested on a workstation equipped with an Intel Core Ultra 9 275HX processor, 64 GB of DDR5-6400 memory and an NVIDIA GeForce RTX 5070 GPU running Windows 11 Pro. The system achieved a measured detection precision of 0.95, indicating performance suitable for automated behavioural analysis without manual annotation.
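Precision measures the share of the system's detections that are correct: TP / (TP + FP). The article does not say how a detection was judged correct. This sketch assumes a common convention: an intersection-over-union (IoU) of at least 0.5 with a not-yet-matched ground-truth box. The function names and sample boxes are illustrative:

```python
Box = tuple[float, float, float, float]  # x0, y0, x1, y1


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    iw = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    ih = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = iw * ih
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def precision(preds: list[tuple[Box, float]], truths: list[Box],
              iou_thr: float = 0.5) -> float:
    """TP / (TP + FP) with greedy, score-ordered, one-to-one matching."""
    matched: set[int] = set()
    tp = 0
    for box, _score in sorted(preds, key=lambda p: p[1], reverse=True):
        best_j, best_iou = None, iou_thr
        for j, truth in enumerate(truths):
            if j not in matched and (v := iou(box, truth)) >= best_iou:
                best_j, best_iou = j, v
        if best_j is not None:
            matched.add(best_j)
            tp += 1
    return tp / len(preds) if preds else 0.0


# Illustrative boxes: two good detections and one false positive.
truths = [(0.0, 0.0, 10.0, 10.0), (20.0, 20.0, 30.0, 30.0)]
preds = [((0.0, 0.0, 10.0, 10.0), 0.9),
         ((21.0, 21.0, 31.0, 31.0), 0.8),
         ((50.0, 50.0, 60.0, 60.0), 0.7)]
print(f"{precision(preds, truths):.2f}")
```

Precision alone does not account for missed birds. Recall, TP / (TP + FN), would be needed to show that no detections were overlooked.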

Linking feed structure to feeding biomechanics

Analysis of the recorded data identified a measurable relationship between feed particle size and feeding biomechanics. Coarser feed particles resulted in larger beak opening amplitudes and improved ingestion efficiency.
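Beak-opening amplitude can be read from a per-frame gape signal, such as the calibrated distance between the upper and lower beak tips. The sketch below assumes such a series in millimetres is already available. The peak rule, the 1 mm minimum rise and the sample values are hypothetical and are not taken from the study:

```python
from statistics import mean


def opening_amplitudes(gape_mm: list[float], min_rise: float = 1.0) -> list[float]:
    """Amplitude of each beak opening in a per-frame gape series (mm).

    A local maximum counts as an opening when it rises at least `min_rise`
    above the lowest gape seen since the previous counted opening."""
    if len(gape_mm) < 3:
        return []
    amplitudes: list[float] = []
    trough = gape_mm[0]
    for i in range(1, len(gape_mm) - 1):
        g = gape_mm[i]
        trough = min(trough, g)
        if gape_mm[i - 1] < g >= gape_mm[i + 1] and g - trough >= min_rise:
            amplitudes.append(g - trough)
            trough = g  # restart the trough search after this opening
    return amplitudes


# Synthetic gape series for two hypothetical feed treatments (not study data).
series_a = [0.0, 2.0, 5.0, 3.0, 1.0, 4.0, 7.0, 2.0, 0.0]
series_b = [0.0, 1.5, 3.0, 1.0, 0.5, 2.5, 4.0, 1.0, 0.0]
for name, s in (("A", series_a), ("B", series_b)):
    amps = opening_amplitudes(s)
    print(name, amps, mean(amps))
```

The `min_rise` filter keeps small jitter in the segmentation from being counted as separate openings.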

The finding establishes a direct, measurable link between feed granulometry and biomechanical effort during feeding, offering a potential optimisation parameter for precision nutrition strategies. Previous studies had suggested this relationship from physiological observations; the present work adds the ability to measure it in real time.

Data foundation for poultry digital twins

The system architecture also enables combined monitoring of production performance and animal welfare indicators through metrics such as the Beak Efficiency Index (BEI). This creates a potential data foundation for digital poultry production models used in smart farming environments.

The framework enables continuous behavioural monitoring aligned with the requirements of precision livestock farming platforms and digital supply chain traceability systems in agriculture.

Further development is expected to focus on Edge AI deployment and validation under commercial poultry house conditions, particularly in environments with higher levels of occlusion and lighting variability.

Edited by industrial journalist Aishwarya Mambet, AI-powered.
