www.ptreview.co.uk

17

'26

AI Trainer Enables Data-Driven Robotics

Universal Robots introduces AI Trainer platform to support real-world training of physical AI systems using human-guided data capture.

www.universal-robots.com

Universal Robots has unveiled its AI Trainer platform at GTC 2026, marking a shift toward AI-driven robotics where systems learn tasks through data rather than pre-programmed instructions. Developed in collaboration with Scale AI, the platform enables the collection of high-quality datasets directly from industrial robots.

The solution is designed to bridge the gap between AI research and real-world deployment by allowing robots to be trained using the same hardware used in production environments.

Leader–Follower Training for Real-World Tasks

At the core of the AI Trainer is a leader–follower setup. Human operators guide a “leader” robot through a task, while a synchronized “follower” robot replicates the motion in real time.

During this process, the system captures multimodal data including motion, force feedback and visual inputs. These synchronized datasets are used to train advanced AI models, such as Vision-Language-Action (VLA) systems, enabling robots to learn complex and contact-rich tasks.
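As a rough illustration of what "synchronized" multimodal capture can involve, the sketch below aligns three independently timestamped streams (motion, force and camera frames) on the motion timestamps and drops samples where any stream lags too far behind. It is a minimal sketch under stated assumptions, not the AI Trainer's actual pipeline: the `Sample` record, the `nearest` and `synchronize` helpers, and the `max_skew` tolerance are all hypothetical names chosen for this example.

```python
from bisect import bisect_left
from dataclasses import dataclass


@dataclass
class Sample:
    """One synchronized training record (hypothetical layout)."""
    t: float              # timestamp in seconds
    joints: list[float]   # joint positions recorded at time t
    force: list[float]    # force/torque reading closest to t
    frame_id: int         # identifier of the camera frame closest to t


def nearest(timestamps: list[float], t: float) -> int:
    """Return the index of the timestamp closest to t.

    Assumes `timestamps` is sorted in ascending order and non-empty.
    """
    i = bisect_left(timestamps, t)
    if i == 0:
        return 0
    if i == len(timestamps):
        return len(timestamps) - 1
    # Pick whichever neighbour is closer; ties go to the earlier one.
    return i if timestamps[i] - t < t - timestamps[i - 1] else i - 1


def synchronize(motion, force, frames, max_skew=0.02):
    """Align force and camera streams to the motion stream.

    motion: list of (t, joints), force: list of (t, reading),
    frames: list of (t, frame_id), each sorted by time.
    A motion sample is dropped when its nearest force reading or
    camera frame is more than `max_skew` seconds away (assumed tolerance).
    """
    force_t = [t for t, _ in force]
    frame_t = [t for t, _ in frames]
    samples = []
    for t, joints in motion:
        fi = nearest(force_t, t)
        ci = nearest(frame_t, t)
        if abs(force_t[fi] - t) > max_skew or abs(frame_t[ci] - t) > max_skew:
            continue  # streams too far apart to count as simultaneous
        samples.append(Sample(t, joints, force[fi][1], frames[ci][1]))
    return samples
```

Aligning on the motion stream is only one possible design choice; a real system might instead rely on hardware-triggered capture or interpolate between readings rather than picking the nearest one.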

This approach allows robots to imitate human actions and adapt to dynamic environments, supporting more flexible automation.

High-Fidelity Data with Force Feedback

A key challenge in robotics AI development is the lack of high-quality training data. Many systems rely primarily on visual data or are trained on non-industrial robots, limiting their applicability in production.

The AI Trainer addresses this by leveraging direct torque control and force feedback, allowing developers to capture detailed physical interaction data. This enables robots to learn tasks that involve touch, pressure or delicate manipulation.

By collecting data directly on industrial-grade robots, the platform aims to make trained models more reliable to deploy in real-world applications, since training and production use the same hardware.

Continuous Learning Through Data Integration

The platform integrates with Universal Robots’ AI Accelerator and Scale AI’s data infrastructure to create a continuous data loop. Data collected during operation can be used to refine models, which can then be redeployed to improve performance over time.

This “data flywheel” approach supports ongoing optimization of robotic systems and enables faster iteration cycles in AI development.

As part of the collaboration, the companies plan to release a large-scale industrial dataset collected from Universal Robots systems.

Simulation and Synthetic Data Generation

In addition to physical data capture, Universal Robots is exploring simulation-based training using tools from NVIDIA.

Using NVIDIA Omniverse and Isaac Sim, developers can train robots in physics-based virtual environments. These simulations allow for safe testing of complex scenarios and generation of synthetic data at scale.

The company is also evaluating NVIDIA’s Physical AI Data Factory approach to automate data generation and accelerate training workflows.

Toward General-Purpose Robotics

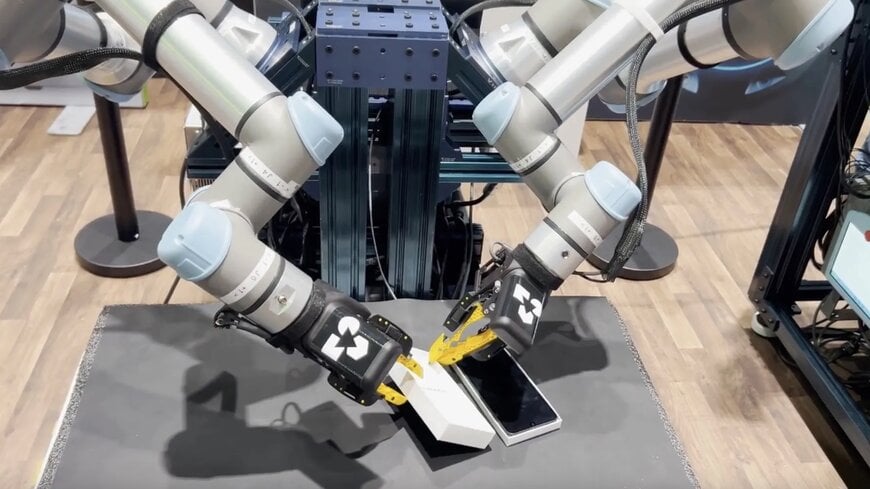

At the event, Universal Robots also demonstrated a robotic foundation model developed by Generalist AI. In the demonstration, two robots autonomously performed a smartphone packaging task, showcasing coordinated movement and precise manipulation.

The combination of high-quality data capture, simulation and foundation models reflects a broader industry trend toward general-purpose robots capable of perceiving, reasoning and learning from experience.

By enabling scalable data-driven training, the AI Trainer platform aims to accelerate the transition from rigid automation to adaptive, intelligent robotic systems.

Edited by Industrial Journalist, Romila DSilva, with AI assistance.
